
Pengcheng Zhu - 朱鹏程

I am an M.Sc. student at Northeastern University, Shenyang, China, supervised by Prof. Yaoming Zhuang. My current research focuses on AI-based 3D computer vision, Visual SLAM, Neural Radiance Fields (NeRF), and 3D Gaussian Splatting (3DGS). During my undergraduate studies, I won 6 national awards in academic competitions and earned guaranteed admission to graduate school with distinction. I was named an Outstanding Graduate of Henan Province in 2022 and of Liaoning Province in 2025. I also worked as an algorithm intern at Didichuxing, focusing on autonomous driving.


Publications


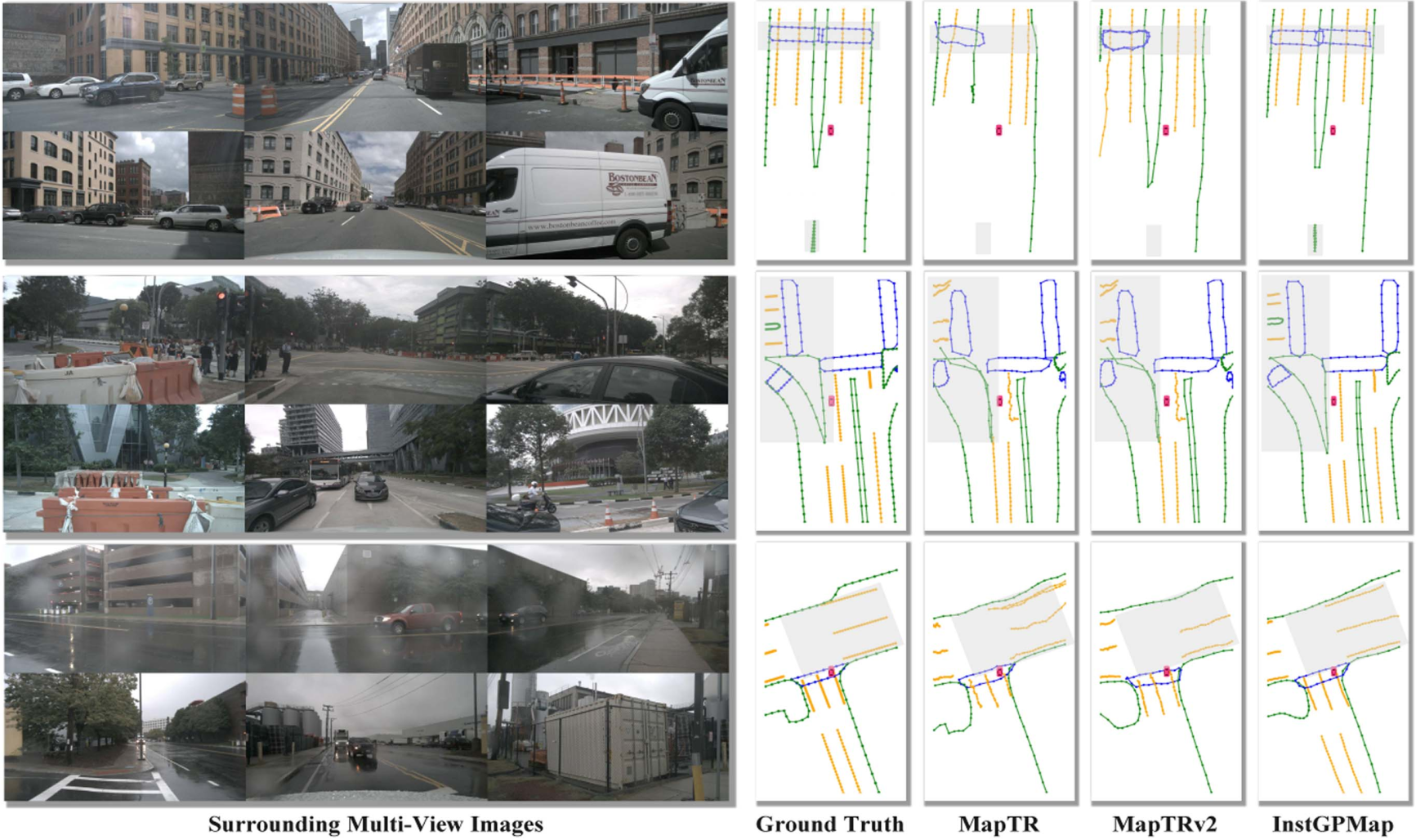

InstGPMap: Real-Time Instance-Level Global Prior Mapping via Historical Predictions Fusion

InstGPMap is an online HD map construction framework that enhances local map inference by maintaining instance-level global prior maps built from historical observations. Instead of relying on implicit BEV features, it explicitly associates map elements across frames using consistent instance IDs and updates them through a GlobalMapUpdate module. Extensive experiments on the nuScenes dataset show that InstGPMap achieves state-of-the-art performance with superior accuracy and storage efficiency.


BEV-PolyNet: BEV-Based Polygonal End to End Parking Slot Detection Framework

Accurate parking slot detection is vital for autonomous parking and intelligent driving. However, existing methods that rely on around-view monitor (AVM) images suffer from distortion, occlusion, and fragmented corner-point regression. To overcome these issues, we propose BEV-PolyNet, an end-to-end framework that generates BEV features from surround-view images, introduces polygonal modeling to capture complete slot structures, and improves convergence by initializing queries with image features and location priors. Experiments on the PS2.0 and LPD datasets demonstrate BEV-PolyNet's superior accuracy and robustness.


MGS-SLAM: Monocular Sparse Tracking and Gaussian Mapping with Depth Smooth Regularization

This work is the first to integrate sparse visual odometry with a dense Gaussian Splatting scene representation. This removes the dependency on depth maps that is typical of Gaussian Splatting-based SLAM systems and improves tracking robustness. We evaluate the system on a range of synthetic and real-world datasets. Its pose estimation accuracy surpasses existing methods and achieves state-of-the-art performance. It also outperforms previous monocular methods in novel view synthesis fidelity.

Awards

China Robotics and Artificial Intelligence Competition National First Prize in Autonomous Driving, 2019


Academic Services

Journal Reviewer: RA-L


Contact

Pengcheng Zhu


Last update: 22 Oct 2025